I just came across a Channel 9 interview Matt Deacon did with me at the Architect Insight Conference in London a couple of weeks ago. It followed a presentation I gave on the importance of identity in cloud computing. Matt keeps my explanation almost… comprehensible – readers may therefore find it of special interest. Video is here.

Sorry Tomek, but I “win”

As I discussed here, the EFF is running an experimental site demonstrating that browsers ooze an unnecessary “browser fingerprint” allowing users to be identified across sites without their knowledge. One can easily imagine this scenario:

- Site “A” offers some service you are interested in and you release your name and address to it. At the same time, the site captures your browser fingerprint.

- Site “B” establishes a relationship with site “A” whereby it sends “A” a browser fingerprint and “A” responds with the matching identifying information.

- You are therefore unknowingly identified at site “B”.
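The three steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `fingerprint` function, the `SiteA` and `SiteB` classes, and the attribute names are all hypothetical, and a real fingerprint would draw on many more browser properties than shown here.

```python
import hashlib


def fingerprint(attributes):
    """Hash browser-visible attributes into a stable identifier (hypothetical scheme)."""
    canonical = "\n".join(f"{key}={attributes[key]}" for key in sorted(attributes))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class SiteA:
    """The site you knowingly give your name and address to."""

    def __init__(self):
        self._identity_by_fingerprint = {}

    def register(self, attributes, name, address):
        # Step 1: store the identity alongside the silently captured fingerprint.
        self._identity_by_fingerprint[fingerprint(attributes)] = {
            "name": name,
            "address": address,
        }

    def lookup(self, browser_fingerprint):
        # Step 2: answer a partner's query with the matching identity, if any.
        return self._identity_by_fingerprint.get(browser_fingerprint)


class SiteB:
    """A site you never identified yourself to."""

    def __init__(self, partner):
        self.partner = partner

    def visit(self, attributes):
        # Step 3: the visitor is identified without being asked anything.
        return self.partner.lookup(fingerprint(attributes))
```

The point of the sketch is that site “B” never needs a cookie or a login: the same attributes produce the same hash, and the hash is the join key.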

I can see browser fingerprints being used for a number of purposes. Some sites might use a fingerprint to keep track of you even after you have cleared your cookies – and rationalize this as providing added security. Others will inevitably employ it for commercial purposes – targeted identifying customer information is high value. And the technology can even be used for corporate espionage and cyber investigations.

It is important to point out that, like any fingerprint, the identification is only probabilistic. EFF is studying what these probabilities are. In my original test, my browser was unique among 120,000 other browsers – a number I found very disturbing.

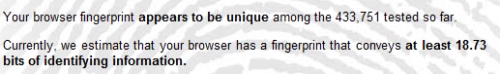
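One way to read a “one in N” figure is as bits of identifying information, which is simply the base-2 logarithm of N. The helper below is a small sketch of that arithmetic; the function name is my own, and it assumes the reported figure means that one browser in N shares the fingerprint.

```python
import math


def identifying_bits(one_in_n):
    """Bits of identifying information carried by a fingerprint shared by 1 in N browsers."""
    if one_in_n < 1:
        raise ValueError("one_in_n must be at least 1")
    return math.log2(one_in_n)


# A fingerprint unique among 120,000 browsers carries roughly 16.9 bits.
bits = identifying_bits(120_000)
```

Every additional bit halves the crowd you can hide in, which is why small-looking leaks add up quickly.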

But friends soon wrote back to report that their browser was even “more unique” than mine! And going through my feeds today I saw a post at Tomek's DS World where he reported a staggering fingerprint uniqueness of 1 in 433,751:

It's not that I really think of myself as super competitive, but these results were so extreme I decided to take the test again. My new score is off the scale:

Tomek ends his post this way:

“So a browser can be used to identify a user in the Internet or to harvest some information without his consent. Will it really become a problem and will it be addressed in some way in browsers in the future? This question has to be answered by people responsible for browser development.”

I have to disagree. It is already a problem. A big problem. These outcomes weren't at all obvious in the early days of the browser. But today the writing is on the wall, and the problem needs to be addressed. It's a matter right at the core of delivering on a trustworthy computing infrastructure. We need to evolve the world's browsers to employ minimal disclosure, releasing only what is necessary, and never providing a fingerprint without the user's consent.

More unintended consequences of browser leakage

Joerg Resch at Kuppinger Cole points us to new research showing how social networks can be used in conjunction with browser leakage to provide accurate identification of users who think they are browsing anonymously.

Joerg writes:

Thorsten Holz, Gilbert Wondracek, Engin Kirda and Christopher Kruegel from Isec Laboratory for IT Security found a simple and very effective way to identify a person behind a website visitor without asking for any kind of authentication. Identify in this case means: full name, address, phone numbers and so on. What they do is simply exploit the browser history to find out which social networks the user is a member of and to which groups he or she has subscribed within that social network.

The Practical Attack to De-Anonymize Social Network Users begins with what is known as “history stealing”.

Browsers don’t allow web sites to access the user’s “history” of visited sites. But we all know that browsers render links to sites we have visited in a different color than links to sites we have not. This is available programmatically through JavaScript by examining the a:visited style. So malicious sites can play a list of URLs and examine the a:visited style to determine if they have been visited, and can do this without the user being aware of it.

This attack has been known for some time, but what is novel is its use. The authors claim the groups in all major social networks are represented through URLs, so history stealing can be translated into “group membership stealing”. This brings us to the core of this new work. The authors have developed a model for the identification characteristics of group memberships – a model that will outlast this particular attack, as dramatic as it is.
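To make the step from “history stealing” to “group membership stealing” concrete, here is a rough sketch of the idea as I understand it, not the authors' actual model. It assumes the attacker has already learned which group URLs appear in the visitor's history and has separately collected member lists for those groups; the function name and data shapes are hypothetical.

```python
def candidate_users(visited_groups, members_by_group):
    """Return the users who belong to every group detected in the visitor's history."""
    member_sets = [
        set(members_by_group[group])
        for group in visited_groups
        if group in members_by_group
    ]
    if not member_sets:
        return set()
    # Each additional group shrinks the set of people the visitor could be.
    return set.intersection(*member_sets)
```

With even a handful of groups the intersection can collapse to a single person, which is consistent with what Joerg reports below.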

The researchers have created a demonstration site that works with the European social network Xing. Joerg tried it out and, as you can see from the table at left, it identified him uniquely – although he had done nothing to authenticate himself. He says,

“Here is a screenshot from the self-test I did with the de-anonymizer described in my last post. I´m a member in 5 groups at Xing, but only active in just 2 of them. This is already enough to successfully de-anonymize me, at least if I use the Google Chrome Browser. Using Microsoft Internet Explorer did not lead to a result, as the default security settings (I use them in both browsers) seem to be stronger. That´s weird!”

Since I’m not a user of Xing I can’t explore this first hand.

Joerg goes on to ask whether history stealing is a crime. If it’s not, how mainstream is this kind of analysis going to become? What is the right legal framework for considering these issues? One thing is for sure: this kind of demonstration, as it becomes widely understood, risks profoundly changing the way people look at the Internet.

To return to the idea of minimal disclosure for the browser, why do sites we visit need to be able to read the a:visited style? This should again be thought of as “fingerprinting”, and before a site is able to retrieve the fingerprint, the user must be made aware that it opens the possibility of being uniquely identified without authentication.

All the help we can get

Now that the world is so thoroughly post-modern, how often do you come across information that qualifies as unexpected? Well, I have to say that the following story, appearing in The Australian, left me wide-eyed:

Yesterday, in the church of the City of London Corporation, (Canon Parrot) presented an updated version of Plow Monday, an observance that dates from medieval times. On this day, the first Monday after Twelfth Night, farm labourers would bring a plough to the door of the church to be blessed.

“When I arrived a few months ago I looked at this service and thought, ‘Why do we have a Plow Monday?’,” Canon Parrott said. Men and women coming to his church no longer used ploughs; their tools were their laptops, their iPhones and their BlackBerries.

So he wrote a blessing and strode out to deliver it before a congregation of 80, the white heat of technology shining from his every pronouncement. “I invite you to have your mobile phone out … though I would like you to put it on silent,” he said.

This was Church 2.0. Behind him, the altar resembled a counter at PC World. Upon it, laid out like holy relics, were four smart phones, one Apple laptop and one Dell.

Then, after another hymn, came the blessing of the smart phones. The Lord Mayor of London offered his BlackBerry to Canon Parrott, which was received with due reverence and placed upon the altar.

The congregation held their phones in the air, and Canon Parrott addressed the Almighty. “By Your blessing, may these phones and computers, symbols of all the technology and communication in our daily lives, be a reminder to us that You are a God who communicates with us and who speaks by Your Word. Amen.”

It makes me wonder what Innis said to McLuhan when he read about this.

Le Figaro carried a report of an additional prayer: “May our tongues be gentle, our e-mails be simple and our websites be accessible.”

Perhaps it is asking too much, but I would have really liked Father Parrott to add, “websites be accessible and secure.” After all – it can't hurt. Perhaps next time?

Federation with ADFS in Windows Server 2008

Steve Riley at Amazon takes a fascinating and non-ideological approach on his new blog. The combination will keep me tuned in – I expect others will feel the same way. He writes:

“As I've talked with customers who have deployed or plan to deploy Windows Server 2008 instances on Amazon EC2, one feature they commonly inquire about is Active Directory Federation Services (ADFS). There seems to be a lot of interest in ADFS v2 with its support for WS-Federation and Windows Identity Foundation. These capabilities are fully supported in our Windows Server 2008 AMIs and will work with applications developed for both the “public” side of AWS and those you might run on instances inside Amazon VPC.

“I'd like to get a better sense of how you might use ADFS. When you state that you need “federation,” what are you wanting to do? I imagine most scenarios involve applications on Amazon EC2 instances obtaining tokens from an ADFS server located inside your corporate network. This makes sense when your users are in your own domains and the applications running on Amazon EC2 are yours.

“Another scenario involves a forest living entirely inside Amazon EC2. Imagine you've created the next killer SaaS app. As customers sign up, you'd like to let them use their own corpnet credentials rather than bother with creating dedicated logons (your customers will love you for this). You'd create an application domain in which you'd deploy your application, configured to trust tokens only from the application's ADFS. Your customers would configure their ADFS servers to issue tokens not for your application but for your application domain ADFS, which in turn issues tokens to your application. Signing up new customers is now much easier.

“What else do you have in mind for federation? How will you use it? Feel free to join the discussion. I've started a thread on the forums, please add your thoughts there. I'm looking forward to some great ideas.”
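The second scenario Steve describes, where customer ADFS servers issue tokens to an application-domain ADFS which in turn issues tokens to the application, can be pictured with a toy model. This sketch is not the ADFS or Windows Identity Foundation API: the classes, names and claim shapes are all made up for illustration, and real tokens would be signed and verified cryptographically rather than compared by issuer name.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Token:
    issuer: str
    audience: str
    claims: tuple  # (name, value) pairs


class CustomerSTS:
    """Stands in for a customer's own corporate token service."""

    def __init__(self, name):
        self.name = name

    def issue(self, user, audience):
        return Token(self.name, audience, (("user", user),))


class AppDomainSTS:
    """Stands in for the token service in the SaaS vendor's application domain."""

    def __init__(self, name, app_name):
        self.name = name
        self.app_name = app_name
        self.trusted_issuers = set()

    def onboard_customer(self, issuer_name):
        # Signing up a customer means adding one trust relationship here.
        self.trusted_issuers.add(issuer_name)

    def exchange(self, token):
        if token.audience != self.name or token.issuer not in self.trusted_issuers:
            raise PermissionError("token not acceptable to the application domain")
        claims = token.claims + (("home_realm", token.issuer),)
        return Token(self.name, self.app_name, claims)


class Application:
    """Trusts tokens only from its own application-domain token service."""

    def __init__(self, name, trusted_issuer):
        self.name = name
        self.trusted_issuer = trusted_issuer

    def sign_in(self, token):
        if token.issuer != self.trusted_issuer or token.audience != self.name:
            raise PermissionError("token not acceptable to the application")
        return dict(token.claims)
```

The design choice worth noticing is that the application itself never changes when a customer is added; only the application-domain service learns about the new issuer.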

I really look forward to this. Let's see where it goes…

Given the mail I get from mutual customers, I know Steve will end up with some interesting insights.

Bizarre customer journey at myPay…

Internet security is a sitting duck that could easily succumb to a number of bleak possible futures.

One prediction we can make with certainty is that as the overall safety of the net continues to erode, individual web sites will flail around looking for ways to protect themselves. They will come across novel ideas that seem to make sense from the vantage point of a single web site. Yet if they implement these ideas, most of them will backfire. Internet users have to navigate many different sites on an irregular basis. For them, the experience of disparate mechanisms and paradigms on every different site will be even more confusing and troubling than the current degenerating landscape. The Seventh Law of Identity is animated by these very concerns.

I know from earlier exchanges that Michael Ramirez understands these issues – as well as their architectural implications. So I can just imagine how he felt when he first encountered a new system that seems to represent an unfortunately great example of this dynamic. His first post on the matter started this way:

“Logging into the DFAS myPay site is frustrating. This is the gateway where DoD employees can view and change their financial data and records.

“In an attempt to secure the interface (namely to prevent key loggers), they have implemented a javascript-based keyboard where the user must enter their PIN using their mouse (or using the keyboard pressing tab LOTS of times).

“A randomization function is used to change the position of the buttons, presumably to prevent a simple click-tracking virus from simply replaying the click sequence. Numbers always appear on the upper row and the letters will appear in a random position on the same row where they exist on the keyboard (e.g. QWERTY letters will always appear on the top row, just in a random order).

“At first glance, I assumed that there would be some server-side state that identified the position of the buttons (as to not allow the user's browser to arbitrarily choose the positions). Looking at how the button layout is generated, however, makes it clear that the position is indeed generated by the client-side alone. Javascript functions are called to randomize the locations, and the locations of these buttons are included as part of the POST parameters upon authentication.

“A visOrder variable is included with a simple substitution cipher to identify button locations: 0 is represented by position 0, 1 by position 1, etc. Thus:

“Thus any virus/program can easily mount an online guessing attack (since it defines the substitution pattern), and can quickly decipher the PIN if it has access to the POST parameters.

“The web site's security implementation is painfully trivial, so we can conclude that the Javascript keyboard is only to prevent keyloggers. But it has a number of side effects, especially with respect to the security of the password. Given the tedious nature of PIN entry, users choose extremely simplistic passwords. MyPay actually encourages this as it does not enforce complexity requirements and limits the length of the password to between 4 and 8 characters. There is no support for upper/lower case or special characters. 36 possible values over a 4-character search space is not terribly secure.”
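Michael's two observations can be illustrated with a short sketch. The actual format of the visOrder parameter is not reproduced in this post, so the layout below is purely an assumption: I treat it as a string listing the characters in the order the buttons were displayed, with clicks reported as positions. The function names are hypothetical too.

```python
import string

# 10 digits plus 26 letters, case-insensitive: the 36 symbols Michael mentions.
ALPHABET = string.digits + string.ascii_lowercase


def decode_pin(vis_order, clicked_positions):
    """Recover the PIN when the button layout travels alongside the click positions."""
    return "".join(vis_order[position] for position in clicked_positions)


def keyspace(length, symbols=len(ALPHABET)):
    """Number of possible PINs of the given length."""
    return symbols ** length


# Hypothetical example: a shuffled digit row and four clicks.
shuffled_digits = "3170592846"
pin = decode_pin(shuffled_digits, [1, 0, 3, 6])

# A 4-character PIN over 36 symbols allows 1,679,616 combinations.
four_char_space = keyspace(4)
```

If the layout and the clicks arrive in the same POST, the randomization protects nothing from anyone who can read that POST, and the small keyspace does the rest.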

A few days later, Michael was back with an even stranger report. In fact this particular “user journey” verges on the bizarre. Michael writes:

“MyPay recently overhauled their interface and made it more “secure.” I have my doubts, but they certainly have changed how they interact with the user.

“I was a bit speechless. Pleading with users is new, but maybe it'll work for them. Apparently it'll be the only thing working for them:

Although most users have established their new login credentials with no trouble, some users are calling the Central Customer Support Unit for assistance. As a result, customer support is experiencing high call volume, and many customers are waiting on hold longer than usual.

We apologize for any inconvenience this may cause. We are doing everything possible to remedy this situation.

Michael concludes by making it clear he thinks “more than a few” users may have had trouble. He says, “Maybe, just maybe, it's because of your continued use of the ridiculous virtual keyboard. Yes, you've increased the password complexity requirements (which actually increased security), but slaughtered what little usability you had. I promise you that getting rid of it will ‘remedy this situation.'”

One might just shrug one's shoulders and wait for this to pass. But I can't do that. I feel compelled to redouble our efforts to produce and adopt a common standards-based approach to authentication that will work securely and in a consistent way across different web sites and environments. In other words, reusable identities, the claims-based architecture, and truly usable and intuitive visual interfaces.

OpenID and Information Cards at the NIH

Drummond Reed writes about real progress by the National Institutes of Health in making their sites accessible through what the U.S. government has started to call Open Identities. The decision by the NIH and the U.S. administration to leverage existing identity infrastructures is tremendously interesting – it turns the usual paradigm for government identity on its head. Drummond, who is Executive Director of the Information Card Foundation, writes:

Bethesda, MD, USA – The first iTrust Forum, held today at the National Institute of Health (NIH) headquarters in Bethesda, MD, featured a four-part session about the U.S. government’s Open Identity for Open Government Initiative. NIH is leading government adoption of this initiative through the NIH Federated Identity Service. NIH demonstrated the first production use of open identity technologies at the iTrust Forum by showing how the Federated Identity Service now accepts logins from several of the ten OpenID and Information Card identity providers who have announced participation in the initiative.

In a separate demonstration, Don Schmidt of Microsoft showed a prototype “multi-protocol selector” – software that will enable users to do both OpenID and Information Card registration/login to websites through one simple, safe, visual interface. This will make authentication at many different websites dramatically simpler for users while at the same time providing strong protection against the main source of phishing attacks.

ICF Executive Director Drummond Reed and OpenID Foundation Executive Director Don Thibeau presented the Open Identity Framework (OIF), a new open trust framework model being developed jointly by the ICF and OIDF to solve the problem of how third-party portable identity credentials such as OpenID and Information Cards can be trusted in very large deployments, such as across the entire U.S. population and all U.S. government websites.

As described in the two foundations’ first joint white paper, the OIF is being developed to meet the requirements of the U.S. ICAM Trust Framework Provider Adoption Process (TFPAP). It applies the principles of open source software and open community development to the definition and deployment of trust frameworks for multiple trust communities around the world. It will allow identity providers to be certified for compliance with the levels of assurance (LOA) required by relying party websites, while also allowing relying parties to be certified for compliance with the levels of protection (LOP) that may be required by identity providers and the users they represent.

The OIF also applies market forces to certification and accountability by enabling identity providers and relying parties to make their own choice of assessor and auditor, provided they meet the qualifications specified by the trust framework for which they will provide assessment or auditing services.
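The two-way certification described above, with identity providers certified for a level of assurance and relying parties certified for a level of protection, amounts to a mutual check. The sketch below is only a way of picturing it: it assumes both levels are ordered integers where higher means stronger, and the class and field names are hypothetical rather than taken from the OIF.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IdentityProvider:
    name: str
    certified_loa: int  # level of assurance the provider is certified for
    required_lop: int   # level of protection it demands from relying parties


@dataclass(frozen=True)
class RelyingParty:
    name: str
    required_loa: int   # level of assurance it demands from identity providers
    certified_lop: int  # level of protection the site is certified for


def can_federate(idp, rp):
    """Both sides must meet the level the other side requires."""
    return idp.certified_loa >= rp.required_loa and rp.certified_lop >= idp.required_lop
```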

The end goal of the Open Identity for Open Government Initiative at NIH and its Center for Information Technology (CIT) is to give users of NIH websites and other electronic resources the ability to have a single account and login procedure that will allow access to all NIH applications, as well as other government and private sector applications. This will make it easier for users to access information resources, remove the responsibility for authentication from website and application owners, and improve security.

The Open Identity initiative is already expanding to other U.S. government agencies beyond NIH, including the Food and Drug Administration (FDA) and the General Services Administration (GSA). The Library of Congress has also expressed an interest in joining.

The ICF congratulates the achievements of the NIH Federated Identity team, led by Debbie Bucci, Valerie Wampler, Jane Small, Jim Seach, Tom Mason, and Peter Alterman, who were recognized with both the 2008 NIH Director’s Award and the Government Information Technology Executive Council (GITEC) 2009 Project Management Excellent Award.

Identity Roadmap Presentation at PDC09

Earlier this week I presented the Identity Keynote at the Microsoft Professional Developers Conference (PDC) in LA. The slide deck is here, and the video is here.

After announcing the release of the Windows Identity Foundation (WIF) as an Extension to .NET, I brought forward three architect/engineers to discuss how claims had helped them solve their development problems. I chose these particular guests because I wanted the developer audience to be able to benefit from the insights they had previously shared with me about the advantages – and challenges – of adopting the claims based model. Each guest talks about the approach he took and the lessons learned.

Andrew Bybee, Principal Program Manager from Microsoft Dynamics CRM, talked about the role of identity in delivering “the Power of Choice” – the ability for his customers to run his software wherever they want, on premises or in the cloud or in combination, and to offer access to anyone they choose.

Venky Veeraraghavan, the Program Manager in charge of identity for SharePoint, talks about what it was like to completely rethink the way identity works in SharePoint so it takes advantage of the claims-based architecture to solve problems that previously had been impossibly difficult. He explores the problems of “Multi-hop” systems and web farms, especially the “Dreaded Second Hop” – which he admits “really, really scares us…” I find his explanation riveting and think any developer of large scale systems will agree.

Dmitry Sotnikov, who is Manager of New Product Research at Quest Software, presents a remarkable Azure-based version of a product Quest has previously offered only “on premise”. The service is a backup system for Active Directory, and involved solving a whole set of hard identity problems involving devices and data as well as people.

Later in the presentation, while discussing future directions, I announce the Community Technical Preview of our new work on REST-based authorization (a profile of OAuth), and then show the prototype of the multi-protocol identity selector Mike Jones unveiled at the recent IIW. And finally, I talk for the first time about “System.Identity”, work on a user-centric next-generation directory that I wanted to take to the community for feedback. I'll be blogging about this a lot and hopefully others from the blogosphere will find time to discuss it with me.

New test results for SAML Profile For eGovernment

The success of the Identity Metasystem depends heavily on having products available from multiple vendors that are proven to interoperate and ready to deploy. Kantara Initiative and Liberty Alliance have contributed significantly to this by helping test products against specific profiles. Kudos to everyone involved with the definition, organization and testing of the eGovernment SAML 2.0 profile v1.5. This represents a real step forward given the diversity of products involved.

SAN FRANCISCO, Sept. 30 — Kantara Initiative and Liberty Alliance today announced that identity products from Entrust, IBM, Microsoft, Novell, Ping Identity, SAP and Siemens have passed Liberty Interoperable(TM) SAML 2.0 interoperability testing. These vendors participated in the third Liberty Interoperable full-matrix testing event to be administered by the Drummond Group Inc., and the first event to test products against the new eGovernment SAML 2.0 profile v1.5 recently released by Liberty Alliance. Web-based full-matrix testing allows vendors to participate from anywhere in the world and features rigorous processes for ensuring products meet SAML 2.0 interoperability requirements for open, secure and privacy-respecting federated identity management.

“The summer 2009 full-matrix testing event included more vendors than ever before, reflecting the worldwide demand among enterprises and governments for SAML 2.0 identity-enabled solutions that have proven to interoperate,” said Roger Sullivan, president of the Kantara Initiative Board of Trustees, president of Liberty Alliance and vice president, Oracle Identity Management. “Organizations can count on Liberty Interoperable for products that have proven to meet interoperability requirements today and over the long-term as the program moves to expand within Kantara Initiative to test against additional identity standards and protocols.”

This year's program featured enhanced SAML 2.0 testing scenarios between Service Provider (SP) and Identity Provider (IdP). The eGovernment SAML 2.0 profile and its requisite test plan have been developed by Liberty Alliance with input from the Danish, New Zealand and US governments. Testing processes for the eGovernment profile included multiple SP logout scenarios, requested authentication context comparisons, and other aspects of SAML 2.0 necessary to meet interoperability, privacy, security and transparency requirements in the global eGovernment sector. A review of the SAML 2.0 v1.5 eGovernment profile is available here.

“SAML 2.0 is the most popular federation protocol in the industry and utilized by commercial, educational, and government institutions around the globe,” said Gerry Gebel, VP and service director at Burton Group. “Federated single sign-on demand is growing, spurred by broad adoption of SaaS applications and the general increase in collaboration among business partners in every industry. The Liberty Interoperable program is instrumental to sustaining successful deployments in advanced federation scenarios where multiple products are in use.”

During the July 14 – September 4, 2009 testing event, the following products demonstrated interoperability based on a variety of SAML 2.0 conformance modes. A detailed list outlining what each vendor passed is available at http://tinyurl.com/yahs2u8

Entrust — Entrust IdentityGuard Federation Module 9.2 is a part of Entrust's versatile authentication platform, supporting numerous authentication methods in one cost-effective solution. Organizations are empowered to choose the right authentication method(s) for their users accessing enterprise, consumer, government or mobile applications. Entrust IdentityGuard includes support for username & password, IP-geolocation, device-ID, questions and answers, out-of-band OTP soft tokens (via voice, SMS, e-mail), grid and eGrid cards, digital certificates and a range of hardware OTP tokens. Entrust IdentityGuard enables rapid deployment, centralized policy management, and an easy integration into the enterprise. Entrust IdentityGuard also includes the ability to apply transaction digital signatures for increased confidence in online transactions. Entrust IdentityGuard serves as a certified SAML 2.0 identity provider, providing standards-based interoperability to organizations. Combined with Entrust's zero-touch fraud detection solution, Entrust IdentityGuard provides a powerful risk-based solution for authenticating users.

Entrust — Entrust GetAccess 8.0 delivers a single entry and access point for user authentication and authorization across multiple Web portal applications. The solution delivers full service provider (SP) capabilities and provides organizations with security, flexibility and performance to personalize the user experience of a Web portal through the following key services: flexible authentication, including seamless integration with Entrust IdentityGuard for step-up authentication; proven authentication interoperability via standards such as SAML, Kerberos, X.509 and others; SSO to Web and non-Web applications via SAML; authorization including fine-grained access control to online resources; rich policy management capabilities, allowing controlled access based on environmental considerations (e.g. authentication method used, physical location, TOD, external data sources); centralized session management; personalization of content; integration with leading application and portal vendors; web-based tools for business administration and operational control.

IBM — IBM Tivoli® Federated Identity Manager (TFIM) 6.2 provides a full featured web access management solution for managing identity and access to resources that span companies or security domains. Rather than replicate identity and security administration across companies, Tivoli Federated Identity Manager provides a simple, loosely coupled model for managing trusted identities and providing them with access to information and services including SaaS and cloud-based deployments. For companies deploying Service Oriented Architecture (SOA) and Web Services, TFIM provides a centralized identity mediation services for federated Web services identity management across multiple domains (e.g. Java, .NET and mainframe). TFIM supports the following standards: SAML Protocol 1.0/1.1/2.0, OpenID Authentication 1.1/2.0 – OpenID Simple Registration Extension 1.0, Information Card Profile, WS-Federation Passive Requestor Profile, Liberty ID-FF 1.1/1.2, WS-Trust 1.2/1.3.

Microsoft — Microsoft Active Directory Federation Services (AD FS) 2.0 enables Active Directory to be an identity provider in the claims based access platform. AD FS provides end users with a single sign-on experience across applications, platforms and organizations and simplifies identity management for IT Pros. AD FS 2.0 is part of the Windows Server platform, and supports both on-premises and cloud solutions.

Novell — Novell Access Manager 3.1 simplifies and safeguards online asset-sharing, helping customers control access to Web-based and traditional business applications. Trusted users gain secure authentication and access to portals, Web-based content and enterprise applications, while IT administrators gain centralized policy-based management of authentication and access privileges. What's more, Novell Access Manager supports a broad range of platforms and directory services, and it's flexible enough to work in even the most complex multi-vendor computing environments. Novell Access Manager makes administration easy. You can use it to centralize access control for all digital resources, and it eliminates the need for multiple software tools at various locations. One access solution fits all applications and information assets. In addition, Novell Access Manager includes support for major federation standards including Security Assertions Markup Language (SAML), WS-Federation and Liberty Alliance.

Ping Identity — PingFederate v6.1 is an Internet Identity Security platform that delivers an enterprise-class, scalable, cost effective and standards-based software solution for enabling Internet Single Sign-On, Identity-Enabled Web Services and Internet User Account Management. PingFederate provides a centralized platform for managing all of your external identity connections with customers, Software-as-a-Service (SaaS) and Business Process Outsourcing (BPO) providers, partners, affiliates and others. Your organization can have Internet SSO and Identity-Enabled Web Services connections in days with point and click connection configuration, out-of-the-box integration capabilities, multi-protocol support, and automated user account management. Over 350 enterprises and service providers worldwide base their Internet identity security strategy on PingFederate.

SAP — The next release of SAP NetWeaver Identity Management 7.2 is planned for the second quarter 2010. SAP plans to significantly enhance the product with an Identity Provider (IdP) and Secure Token Service (STS) to support web-based Single Sign-On via SAML 2.0 assertions, identity federation and Single Sign-On for web services. The existing features to centrally administrate and provision users — provided by the Identity Center and Virtual Directory Server components — will be extended and allow for integrated scenarios with the IdP. The new IdP and STS will add access management features to the SAP NetWeaver Identity Management and allow the solution to be integrated into an Enterprise Single Sign-On environment reducing TCO and administrative effort.

Siemens — DirX Access V8.1 is a comprehensive solution that integrates access management, entitlement management, identity federation, Web services security, and Web Single Sign-on in one single product to protect your web applications and web services from unauthorized use. DirX Access provides for the consistent enforcement of business security policies through external, centralized, policy-based authentication and authorization services, enhances Web user experience through local and federated single sign-on and supports regulatory compliance with audit and reporting both within and across security domains.

About the Liberty Interoperable Program

The ongoing success of the Liberty Interoperable program is demonstrated by the wide-scale deployment of SAML 2.0 products and the increasing number of businesses and governments, such as the US GSA, now requiring vendors to pass Liberty Alliance testing. With nearly seven years of testing products for true interoperability of identity specifications, Liberty Alliance expects to expand the Liberty Interoperable program within Kantara Initiative to reflect growing momentum for proven interoperable multi-protocol identity solutions. More information about the program, including a list of all vendors who have passed Liberty Alliance testing, is available here.

Enterprises and governments are going to be able to do important projects and derive tangible benefits very quickly using this cross-vendor family of products. That's really important. Of course, there's more to identity than browser-based federation… But one of the most encouraging signs is that the same kind of progress we see in the Kantara announcement is being made with the user-centric and privacy-enhancing technologies that many of us are working on to complement the SAML technology.

Microsoft: minimum disclosure about minimum disclosure?

Back from vacation and catching up on some blogs, I found this piece by Felix Gaehtgens at Kuppinger Cole in Germany:

A good year ago, Microsoft acquired an innovative company called U-Prove. That company, founded by visionary Stefan Brands, had come up with a privacy-enabling technology that effectively allows users to safely transmit the minimum required information about themselves when required to – and for those receiving the information, a proof that the information is valid. For example: if a country issued a digital identification card, and a service provider needed to check whether the holder is over 18 years of age, the technology would allow it to do just that – instead of having to transmit a full data set, including the date of birth. The technology works through a complex set of encryption and signing rules and is a win-win for both users who need to provide information as well as those taking it (also called “relying parties” in geek speak). With the acquisition of U-Prove, Microsoft now owns all of the rights to the technology – and more importantly, the patents associated with it. Stefan Brands is now part of Microsoft’s identity team, filled with top-notch brilliant minds such as Dick Hardt, Ariel Gordon, Mark Wahl, Kim Cameron and numerous others.

Privacy advocates should be (and are) happy about this technology because it effectively allows consumers to protect their information, instead of forcing them to give up unnecessary information to transact business. How many times have we needed to give up personal information for some type of service without any real need for this information? For example, if you’re not shipping anything to me… what’s the point of providing my home address? If you are legally required to verify that I’m over 18 (or 21), why would you really need to know my credit card details and my home address? If you need to know that I am a customer of one of your partner banks, why would you also need to know my bank account number? Minimum disclosure makes transactions possible with exactly the right fit of personal details being exchanged. For those enterprises taking the data, this is also a very positive thing. Instead of having to “coax” unnecessary information out of potential customers, they can instead make a clear case of what information they do require for fulfilling the transaction, and will ultimately find consumers more willing to do business with them.

So all of this is really great. And what’s even better, Microsoft’s chief identity architect, Kim Cameron, has promised not to “hoard” this technology for Microsoft’s own products, but to actually contribute it to society in order to make the Internet a better place. But more than one year down the line, Microsoft has not made a single statement about what will happen to U-Prove: minimum disclosure about its minimum disclosure technology (pun intended!). In a post that I made a year ago, I tried making the point that this technology is so incredibly important for the future of the Internet that Microsoft should announce what it plans to do with the technology (and the patents associated with it).

Kim’s response was that Microsoft had no intention of “hoarding” the technology for its own purposes. He highlighted, however, that it would take time to do this – time for Microsoft’s lawyers, executives and technologists to work out the details of doing this.

Well – it’s been a year, and the only “minimum disclosure” that we can see is Microsoft’s unwillingness to talk about it. The debate is heating up around the world about different governments’ proposals for electronic passports and ID cards. Combined with the growing dangers of identity theft and continued news about spectacular leaks and thefts of personal information, this technology would really make our day. Unless you’re a spammer or identity thief of course.

So it’s about time Microsoft started making some statements to reassure all of us about what is going to happen with the U-Prove technology, and – more importantly – with the patents. Microsoft has been reinventing itself and making a continuous effort to turn from the “bad guys” of identity a decade ago (in the old Hailstorm days with Microsoft Passport) into the “good guys” of identity with its open approach to identity and privacy protection and standardisation. At Kuppinger Cole we have loudly applauded the Identity Metasystem and Infocards as a ground-breaking innovation that we believe will transform the way we use the Internet in the years to come. Now is the time to really start off the transformative wave of innovation that comes when we finally address the dire need for privacy protection. Microsoft has the key in its hands, or rather, locked in a drawer. C’mon guys, when will that drawer finally be opened?

Kuppinger Cole has been an important force in creating awareness about the role of an Identity Metasystem. It has also led in stressing the importance of minimal disclosure technology. I take Felix's concerns very seriously. He's right – I owe people a progress report.

This said, there is no locked drawer. Instead, Felix gets closer to the real explanation in his first paragraph: “the technology works through a complex set of encryption and signing rules.”
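For readers who want a feel for what “minimum disclosure” means in practice, here is a deliberately naive sketch of Felix's over-18 example. It is not the U-Prove protocol and uses none of its cryptography: the issuer simply signs a derived claim instead of the birth date. The names `ISSUER_KEY`, `issue_claim` and `verify_claim` are hypothetical, and the HMAC with a shared key is only a stand-in for a real public-key signature. It also ignores unlinkability, which is one of the hard parts a real minimal disclosure system has to solve.

```python
import hashlib
import hmac
import json
import secrets
from datetime import date

# Hypothetical issuer secret. A real system would use a public-key
# signature so that relying parties never hold the issuer's key.
ISSUER_KEY = secrets.token_bytes(32)


def issue_claim(birth_date: date, today: date) -> dict:
    """Issuer side: derive the yes/no claim and sign only that."""
    # Subtract one year if this year's birthday has not happened yet.
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    age = today.year - birth_date.year - (0 if had_birthday else 1)
    claim = {"over_18": age >= 18}
    payload = json.dumps(claim, sort_keys=True).encode()
    tag = hmac.new(ISSUER_KEY, payload, hashlib.sha256).hexdigest()
    # The birth date itself is never placed in the token.
    return {"claim": claim, "tag": tag}


def verify_claim(token: dict) -> bool:
    """Relying party side: check the tag, then read the single claim."""
    payload = json.dumps(token["claim"], sort_keys=True).encode()
    expected = hmac.new(ISSUER_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, token["tag"]):
        return False
    return token["claim"].get("over_18") is True
```

The relying party learns one bit, over 18 or not, plus evidence that the issuer vouches for it. Everything that makes the real technology hard (and interesting) is what this sketch leaves out.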

The complexity must be tamed for the technology to succeed. There is more to this than brilliant formulas or crypto routines. We need to understand not only how minimal disclosure technology can be used – but how it can be made usable.

There are different kinds of research. Theoretical research is hugely important. But applied research is just as key. Over the last year we've moved from an essentially theoretical grasp of the possibilities to prototypes that demonstrate the feasibility of deploying real, large-scale distributed systems based on minimal disclosure.

I don't have much time for standards and protocols that are NOT built on top of experience with implementation. And if you don't know what your standards and implementations might look like, you can't define the intellectual property requirements.

So we've been working hard on figuring this stuff out. In fact, a lot of progress has been made, and I'll write about that in my next few posts. I'll also reach out to anyone who wants to become more closely involved.