Pangloss sent me reeling recently with her statement that “in the wake of the amazing News of the World revelations, there does seem to be some public interest in a quick note on why there is (some) controversy around whether hacking messages in someone's voicemail is a crime.”

What? Outside Britain I imagine most of us have simply assumed that breaking into people's voicemails MUST be illegal. So Pangloss's excellent summary of the situation – I share just enough to reveal the issues – is a suitable slap in the face of our naivete:

The first relevant provision is RIPA (the Regulation of Investigatory Powers Act 2000) which provides that interception of communications without consent of both ends of the communication, or some other provision like a police warrant, is criminal in principle. The complications arise from s 2(2) which provides that:

“….a person intercepts a communication in the course of its transmission by means of a telecommunication system if, and only if … (he makes) …some or all of the contents of the communication available, while being transmitted, to a person other than the sender or intended recipient of the communication”. [my itals]

Section 2(4) states that an “interception of a communication” has also to be “in the course of its transmission” by any public or private telecommunications system. [my itals]

The argument that seems to have been made to the DPP, Keir Starmer, in October 2010, by QC David Perry, is that voicemail has already been transmitted and is therefore no longer “in the course of its transmission.” Therefore a RIPA s 1 interception offence would not stand up. The DPP stressed in a letter to the Guardian in March 2011 that this interpretation was (a) specific to the cases of Goodman and Mulcaire (yes, the same Goodman who's just been re-arrested and indeed went to jail) and (b) not conclusive, as a court would have to rule on it.

We do not know the exact terms of the advice from counsel as (according to advice given to the HC in November 2009) it was delivered in oral form only. There are two possible interpretations of even what we know. One is that messages left on voicemail are “in transmission” till read. Another is that even when they are stored on the voicemail server unread, they have completed transmission, and thus accessing them would not be “interception”.

Very few people, I think, would view the latter interpretation as plausible, but the former seems to have carried weight with the prosecution authorities. In the case of Milly Dowler, if (as seems likely) voicemails were hacked after she was already deceased, there may have been messages unread and so a prosecution would be appropriate on RIPA without worrying about the advice from counsel. In many other cases, e.g. involving celebrities, though, hacking may have been of already-listened-to voicemails. What is the law there?

When does a message to voicemail cease to be “in the course of transmission”? Chris Pounder pointed out in April 2011 that we also have to look at s 2(7) of RIPA which says:

“(7) For the purposes of this section the times while a communication is being transmitted by means of a telecommunication system shall be taken to include any time when the system by means of which the communication is being, or has been, transmitted is used for storing it in a manner that enables the intended recipient to collect it or otherwise to have access to it.”

A common sense interpretation of this, it seems to me (and to Chris Pounder), would be that messages stored on voicemail are deemed to remain “in the course of transmission”, and hence capable of generating a criminal offence when hacked, because they are being stored on the system for later access (which might include re-listening to already played messages).

This seems rather thoroughly to contradict the well known interpretation offered during the debates in the HL over RIPA from Lord Bassam, that the analogy of transmission of a voice message or email was to a letter being delivered to a house. There, transmission ended when the letter hit the doormat.

Fascinating issues. And that's just the beginning. For the full story, continue here.

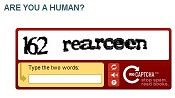

Given that SocialBots will inevitably and quickly evolve, we can see that the ability to demonstrate that you are a natural flesh-and-blood person rather than a robot will increasingly become an essential ingredient of digital reality. It will be crucial that such a proof can be given without requiring you to identify yourself, relinquish your anonymity, or spend your whole life completing grueling captcha challenges.