As you can imagine, over the years I've answered plenty of questions about CardSpace and Information Cards. In fact, the questions have been instrumental in shaping the theory and the implementation. To help put together a definitive set of questions and answers, I'm going to share them on my blog. I invite you to submit further questions and comments. You can post directly by using an InfoCard, post on your own blog with a link back, or write to me using my i-name.

Would banks ever accept self-issued information cards? How could they trust the information on them to be true?

In fact, a number of banks have expressed interest in accepting self-issued cards (protected by PINs) at their online banking sites.

They see self-issued cards as a simple but improved credential when compared to a password. Because a self-issued card is based on public key technology, the user never sees a secret that can be phished. The self-issued card uses a 2048-bit RSA key when authenticating to a site – and there is no key-distribution problem.

These banks would not request or depend on the card's informational claims (name, address, etc.). The banks have already vetted the customer through their Know Your Customer (KYC) procedures. So it is just the crypto that is of interest.

There are also banking sites that are more interested in issuing their own “managed cards” – for branding reasons, and as a way to provide their customers with single sign-on to a constellation of services operated in unrelated data centers.

Finally, some banks are interested in using managed cards as a payment instrument within specific communities (for example, high-value transactions), and as a way to get into new identity-related businesses.

How can managed cards ever help identity providers prevent phishing, if all the end user has is a password?

Once a user switches to CardSpace, phishing is not possible even when passwords are used as an IdP credential.

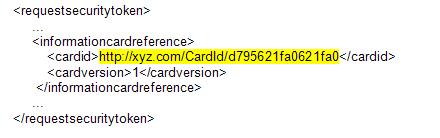

That is because an Information Card reference is included as part of the “Request Security Token” message sent to the IdP. The reference may include a second secret in its CardId, which is never released except when encrypted to a certificate specified in the card's metadata. For example:

Even if the user is tricked into leaking her password, she doesn’t know the CardId and can’t leak it. If the IdP verifies that the correct CardId is present in the Request Security Token message (as well as the username and password), it is impossible for an attacker to phish the user.
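
To make the IdP-side check concrete, here is a minimal sketch in Python. It is not CardSpace code: the `Account` record, the `verify_rst` function and the way passwords are stored are all assumptions made for illustration, and the CardId is assumed to have already been decrypted from the Request Security Token message. The point is only that the request is rejected unless the username, the password and the CardId all match.

```python
import hashlib
import hmac
import os
from dataclasses import dataclass


@dataclass
class Account:
    """Hypothetical IdP-side record for one user (not a CardSpace type)."""
    salt: bytes
    password_hash: bytes
    card_id: str  # secret carried by the managed card; the user never sees it


def hash_password(password: str, salt: bytes) -> bytes:
    # Assumed storage scheme for this sketch: salted PBKDF2-HMAC-SHA256.
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)


def verify_rst(accounts: dict, username: str, password: str, card_id: str) -> bool:
    """Accept the request only if BOTH the password and the CardId match."""
    account = accounts.get(username)
    if account is None:
        return False
    password_ok = hmac.compare_digest(
        hash_password(password, account.salt), account.password_hash
    )
    card_ok = hmac.compare_digest(
        card_id.encode("utf-8"), account.card_id.encode("utf-8")
    )
    return password_ok and card_ok


# Example: an attacker who phished the password still lacks the CardId.
salt = os.urandom(16)
accounts = {
    "alice": Account(salt, hash_password("correct horse", salt), "card-secret-123")
}
legitimate = verify_rst(accounts, "alice", "correct horse", "card-secret-123")
phished = verify_rst(accounts, "alice", "correct horse", "attacker-guess")
```

In this sketch `legitimate` is accepted and `phished` is rejected, because a leaked password alone never satisfies the CardId comparison.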

Why can't you use smart cards, dongles, and one-time password devices with CardSpace?

You can. Using a password is only one option for accessing IdPs. CardSpace currently supports four authentication methods:

- Kerberos (as supported on *NIX systems and Active Directory): This is typically useful when accessing an IdP from inside a firewall.

- X.509: This allows conventional dongles, smart cards and soft certs to be used. Further, since many devices (such as biometric sensors) integrate with Windows by emulating an X.509 device, it supports these other authentication methods as well.

- Self-Issued Card: In other words, the RSA keys present in one of your self-issued cards can be used to create a SAML token.

- Username / password: The password can be generated by an OTP device if the IdP supports it, and this is an extremely safe option.
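
To illustrate the last option, here is a minimal sketch of how an IdP might check a password produced by an OTP device. Nothing above says which OTP algorithm a given device or IdP uses; I am assuming the counter-based HOTP scheme from RFC 4226 purely as an example, and `idp_accepts` is a hypothetical helper, not part of CardSpace.

```python
import hashlib
import hmac
import struct


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """Compute an RFC 4226 HOTP value for a shared secret and counter."""
    # HMAC-SHA1 over the counter encoded as an 8-byte big-endian integer.
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low nibble of the last byte picks a 4-byte window.
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def idp_accepts(secret: bytes, counter: int, submitted: str) -> bool:
    """Hypothetical IdP check: compare the submitted password to the expected OTP."""
    return hmac.compare_digest(hotp(secret, counter), submitted)


# The secret below is the published RFC 4226 test secret, not a real credential.
test_secret = b"12345678901234567890"
first_code = hotp(test_secret, 0)  # "755224" per RFC 4226, Appendix D
```

Because each value is accepted only once, a password captured by a phishing site is of little use afterwards, which is why this option combines well with the CardId check described earlier.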