The UK's Register has been running a a series of articles by John Leyden (here, here and here) about Verified By Visa. (VByV) Verified By Visa uses the same kind of “site redirection” I've written about many times with respect to OpenID and other password-based federation technologies – but in this case it is a banking password that can be stolen.

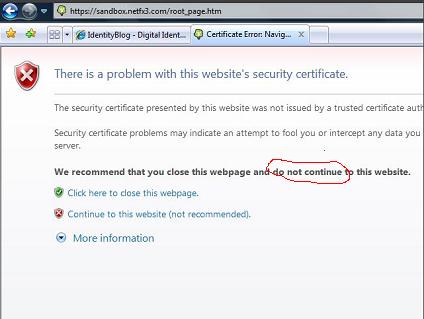

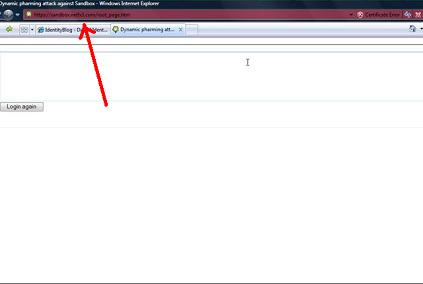

The phishing scenario is simple enough. If you happen onto an “evil” site and are tricked into purchasing something, it can “misdirect” your browser to a counterfeit VByV signon page. As John explains, you have little chance, as a user, of knowing you are being duped, but once you enter your password it is available to the evil site for both instant use an future reuse. Those familiar with this site will understand that this is yet another example of an attack that cannot be made against Information Card users.

Beyond focussing attention on the phishing problems inherent in “site redirection” approaches, John argues that the system – though claiming to be more secure – is actually just as vulnerable as non-VByV mechanisms. He then argues – and I have know knowledge as to whether this is the case – that the false claims about increased security are being used to reject complaints by end-users about irregularities and fraudulent purchases made in their name. If that were true, it would be scandalous.

Friends, this is a case of “The Writing on the Wall”. I think people in the industry should see John's work as a sign of what's to come. He is the guy in the fable who is shouting out that “the Emperor has no clothes!” And he's doing it cogently to the wide readership of the Register.

If I were an advisor to the emperor at this point I would insist on two things:

- admit the vulnerability of all systems based on “site redirection”; and

- start getting into phishing-resistant technologies like Information Cards while one's modesty can still be protected.

John makes his points without the stench of jargon. In spite of this, North American readers will require a dictionary to follow what he's saying (I did). I'm talking here about a dictionary of British idioms (thanks to my friend Richard Turner for boosting my vocabulary on this one) :

punter n guy. A punter is usually a customer of some sort (the word originally meant someone who was placing bets at a racecourse)…

To see a bit of what mainstream press worldwide will be writing about as the paucity of redirection technology for long-tail scenarios is concerned, I do suggest looking first hand at these articles. One small taste:

Both Verified by Visa (VbyV) and MasterCard's equivalent SecureCode service are marketed as offering extra security checks to online purchases. Importantly, the schemes also transfer liability for bogus transactions away from merchants who use the system back towards banks (and perhaps ordinary e-commerce punters).

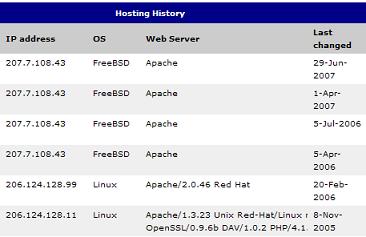

Online shoppers who buy goods and service with participating retailers are asked to submit a VbyV or SecureCode password to authorise transactions. These additional checks are typically submitted via a website affiliated to a card-issuing bank but with no obvious connection to a user's bank.

Punters aren't informed up front that a merchant has signed up to Verified by Visa. Sites used to authenticate a VbyV or SecureCode password routinely deliver a dialogue box using a pop-up window or inline frame, making it difficult to detect whether or not a site is genuine.

The appearance of phishing attacks hunting for Verified by Visa passwords are among the reasons some punters are wary of the technology.

Once obtained by fraudsters, either by direct phishing attack or through other more subtle forms of social engineering trickery, VbyV login credentials make it easier for crooks to make purchases online while simultaneously making it harder for consumers to deny responsibility for a fraudulent transaction…

The little-publicised mandatory use of the technology by some banks means that those with reservations have an uphill struggle to opt out of the scheme…

Verified by Visa and Mastercard SecureCode are there purely to protect the banks, not the card holder. They offer zero additional protection to the consumer, but allow the bank to claim that transactions using purloined credit card credentials were really made by the card holder. It is as simple as that.

[More here}.