Urs Gasser has posted some feedback on Rubinstein and Daemen's new Metasystem Privacy paper on his Law and Information blog. Urs is an expert in cyber law associated with the Berkman Center at Harvard Law School.

Microsoft released a white paper entitled “The Identity Metasystem: Towards a Privacy-Compliant Solution to the Challenges of Digital Identity.” The excellent paper, authored by Microsoft’s Internet Policy Counsel Ira Rubinstein and Tom Daemen, senior attorney with Microsoft, and posted on Kim Cameron’s blog, is a must-read for everyone interested in user-centric ID management systems. (Disclosure: As you can see from the acknowledgments, I commented on a draft version of the paper, based on my earlier observations on “Identity 2.0”-like initiatives.)

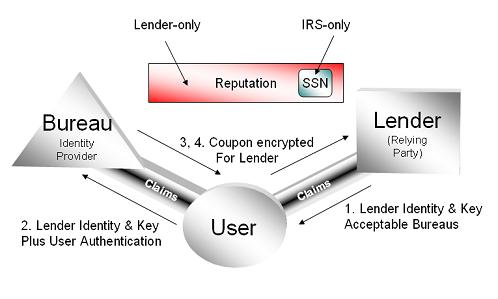

Among my main concerns (check here for other problem areas) has been Microsoft’s claim that the i-card model is “by design” in compliance with the unambiguous and informed consent requirement as set forth, for instance, by EU data protection law. I’ve argued that the “hardwired” argument (obviously a variation on the theme “regulation by code”) might be sound if one focuses on a particular relationship between one user and one identity provider and/or one relying party, as the white paper does. However, at the aggregated level, the complexity of the i-card model, i.e. the network of informational relationships between one user and multiple ID providers and relying parties, increases dramatically. If we were serious about the informed consent requirement, so my argument goes, we would want the user to anticipate not only the consequences of consent vis-à-vis one ID provider, but also to understand the interplay among all the components of the ID system. Even in less complex informational environments, experience has shown that simply making various privacy policies available can’t be the answer to this problem, as the white paper seems to acknowledge.

In this regard, I particularly sympathize with the white paper’s footnote 23. It might indeed be a starting point for an answer to what we might call the “transparency challenge”: to create “a system enabling web sites to represent privacy policies in a simple, iconic fashion analogous to food labels. This would allow consumers to see at a glance how a site’s practices compared to those of other Web sites using a small number of universally accepted visual icons that were both secure against spoofing and verified by a trusted third party.” (p. 19, FN 23.) Such a system could become particularly effective if the icons, made machine-readable in a way analogous to Creative Commons labels, were integrated into search results and monitored by “Neighborhood campaigns” similar, for instance, to Stopbadware.com.

Although Microsoft’s paper leaves some important issues unaddressed, it seems plain to me that it takes the discussion on identity and privacy protections as code and policy an important step further, in a sensible and practical manner.

I agree with Urs when he talks about where we can go with visual icons representing the practices and policies of sites and identity providers. Let's do it.

Just to be clear, I see Information Card technology as providing a platform for people to control their digital identity. As a platform, it leaves people the freedom to put things of their choice onto that platform.

Let's make an analogy with some other technology, say plasma screens. The technologists can produce a screen with fantastic resolution, but people can still use it to view blurry, distorted signals if they want to. But once people see the crystal clarity of high definition, they move away from the inferior uses. Even so, there might still be historically important artifacts that they want to watch in spite of their poor resolution.

In the same way, people can use the Information Card technology to host identity providers with different characteristics. It's a platform. And my belief is that a high fidelity and transparent identity platform will lead to uses that respect our rights. If this requires help from legislators and the policy community, that's just part of the process. In other words, I don't think CardSpace is the magic bullet that solves all privacy problems. But it is an important step forward to have a platform finally allowing them to be solved.

Once you let one party send information to another party, there is no way to prevent it – technically – from sending a correlating identifier. As a morbid example, terrorists have been known to communicate by depositing and withdrawing money from bank accounts. The changes in the account are linked to a codebook. So any given information field can be used to communicate unrelated information.
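To make the codebook point concrete, here is a minimal Python sketch, assuming two colluding parties who have agreed in advance on a trivial code: each pair of decimal digits of a hidden correlating identifier rides in the last two digits (the "cents") of an otherwise innocent-looking numeric value. The function names, the three-value default and the base of 10000 (100.00) are hypothetical, chosen only for illustration; nothing here is part of CardSpace or any Information Card protocol.

```python
# Illustrative covert channel: a hidden identifier carried in ordinary-looking
# numeric values. All names and parameters are hypothetical.


def hide_identifier(identifier: int, pairs: int = 3, base: int = 10000) -> list[int]:
    """Spread a non-negative integer across `pairs` numeric values.

    Each value is `base` (a multiple of 100, e.g. 10000 cents = 100.00)
    plus two decimal digits of the identifier, least-significant pair first.
    """
    if base % 100 != 0:
        raise ValueError("base must be a multiple of 100 so the last two digits are free")
    if not 0 <= identifier < 100 ** pairs:
        raise ValueError("identifier does not fit in the given number of values")
    values = []
    for _ in range(pairs):
        # Peel off the two lowest decimal digits and embed them in one value.
        identifier, low_digits = divmod(identifier, 100)
        values.append(base + low_digits)
    return values


def recover_identifier(values: list[int]) -> int:
    """Rebuild the identifier from the last two digits of each value."""
    identifier = 0
    for value in reversed(values):  # the most-significant pair was sent last
        identifier = identifier * 100 + value % 100
    return identifier


if __name__ == "__main__":
    sent = hide_identifier(123456)
    print(sent)                      # [10056, 10034, 10012]
    print(recover_identifier(sent))  # 123456
```

Each value looks unremarkable on its own, which is why the platform cannot technically rule out such channels; what it can do is avoid creating correlation handles itself.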

What you can do is prevent the platform itself from creating correlation handles or doing things without a user's knowledge. You can use policy, legal frameworks and market forces so providers and consumers of identity are transparent about what they are doing. You can create technology that can help discover and prove breaches of transparency. You can facilitate holding third parties to their promises. And you can put in place social and legal protections of technology users, along the lines of the privacy-embedded laws of identity.

That's why I see the contributions of legal and policy experts as being just as fundamental as the contribution of technologists in solving identity problems. In the long term, the social issues may well be more important than the technical ones. But the success of the technology is what will make it possible for people to understand and discuss those issues.

I advise following some of the thoughtful links to which Urs refers.