I need to correct a few of the factual errors in recent posts by James Governor and Jon Udell. James begins by describing our recent get-together:

We talked about Project Geneva, a new claims based access platform which supersedes Active Directory Federation Services, adding support for SAML 2.0 and even the open source web authentication protocol OpenID.

Geneva is big news for OpenID. As David Recordon, one of the prime movers behind the standard said on Twitter yesterday:

Microsoft’s Live ID is adding support for OpenID. Goodbye proprietary identity technologies for the web! Good work MSFT

TechCrunch took the story forward, calling out de facto standardization:

Login standard OpenID has gotten a huge boost today from Microsoft, as the company has announced that users will soon be able to login to any OpenID site using their Windows Live IDs. With over 400 million Windows Live accounts (many of which see frequent use on the Live’s Mail and Messenger services), the announcement is a massive win for OpenID. And Microsoft isn’t just supporting OpenID – the announcement goes as far as to call it the de facto login standard [the announcement actually calls it “an emerging, de facto standard” – Kim]

But that’s not what this post is supposed to be about. No I am talking about the fact [that] later yesterday evening Kim hacked his way into a party at the standard using someone else’s token! [Now this is where I think some “small tweaks” start to be called for… – Kim]

It happened like this. I was talking to Mary Branscombe, Simon Bisson and John Udell when suddenly Mary jumped up with a big smile on her face. Kim, who has a kind of friendly bear look about him, had arrived. She ran over and then I noticed that a bouncer had his arm across Kim’s chest (”if your name’s not down you’re not coming in”). Kim had apparently wandered upstairs without getting his wristband first. Kim disappeared off downstairs, and I figured he might not even come back. A few minutes later though and there he was. I assumed he had found an organizer downstairs to give him a wristband… When he said that he actually had taken the wristband from someone leaving the party, and hooked it onto his wrist me and John practically pissed our pants laughing. As Jon explains (in Kim Cameron's Excellent Adventure):

If you don’t know who Kim is, what’s cosmically funny here is that he’s the architect for Microsoft’s identity system and one of the planet’s leading authorities on identity tokens and access control.

We stood around for a while, laughing and wondering if Kim would reappear or just call it a night. Then he emerged from the elevator, wearing a wristband which — wait for it — belonged to John Fontana. Kim hacked his way into the party with a forged credential! You can’t make this stuff up!

While there is certainly some cosmic truth to this description, and while I did in fact back away slightly from the raucus party at the precise moment James says he and Jon “pissed their pants”, John Fontana did NOT actually give me his wristband. You see, he didn't have a wristband either.

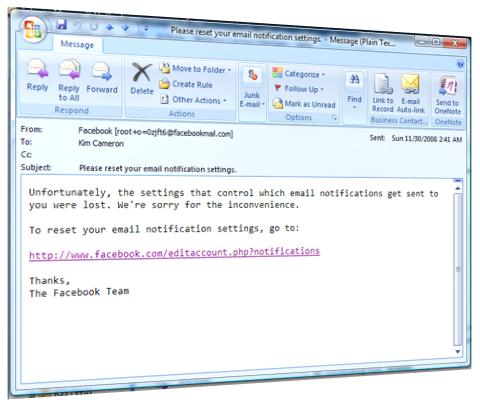

So let's go through this step by step. It all began with the invite that brought me to the party in the first place:

As a spokesperson for PDC2008, we’re looking forward to having you join us at the Rooftop Bar of the Standard Hotel for the Media/Analyst party on October 27th at 7:00pm

This invite came directly from the corporate Department of Parties.

I point this out just to ward off any unfair accusations that I just wanted to raid the party's immense Martini bar. Those who know me also know nothing could be further from the truth. You have to force a Martini into my hands. My attendance represented nothing but Duty. But I digress.

Protocol Violation

The truth of the matter is that I ran into John Fontana in the cafe of the Standard and we arrived at the party together. He had been invited because this was, ummm, a Press party and he was, ummm, Press.

However, it didn’t take more than a few seconds for us to see that the protocol for party access control had not been implemented correctly. We just assumed this was a bug due to the fact that the party was celebrating a Beta, and that we would have to work our way past it as all beta participants do.

Let’s just say the token-issuing part of the party infrastructure had crashed, whereas the access control point was operating in an out-of-control fashion.

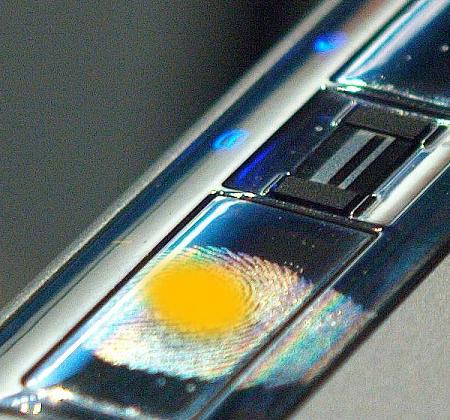

Looking at it from an architectural point of view, the admission system was based on what is technically called “bearer” tokens (wristbands). Such tokens are NOT actually personalized in any way, or tied to the identity of the person they are given to through some kind of proof. If you “have” the token, you ARE the bearer of the token.

So one of those big ideas slowly began to take root in our minds. Why not become bearers of the requisite tokens, thereby compensating for the inoperative token-issuing system?

Well, at that point, since not a few of the people leaving the party knew us, John and I explained our “aha”, and pointed out the moribund token-issuing component. As is typical of people seeing those in need of help, we were showered with offers of assistance.

I happened to be rescued by an unknown bystander with incredibly nimble and strong fingers and deep expertise with wristband technology. She was able to easily dislodge her wristband and put it on me in such a way that it’s integrity was totally intact.

There was no forged token. There was no stolen token. It was a real token. I just became the bearer.

When we got back upstairs, the access control point evaluated my token – and presto – let me in to join a certain set of regaling hedonists basking in the moonlight.

But sadly – and unfairly – John’s token was rejected since its donor, lacking the great skill of mine, had damaged it during the token transplant.

Despite the Martini now in my hand, I was overcome by that special sadness you feel when escaping ill fate wrongly allotted to one more deserving of good fortune than you. John slipped silently out of the queue and slinked off to a completely different party.

So that's it, folks. Yet the next morning, I had to wake up, and confont again my humdrum life. But I do so inspired by the kindness of both strangers and friends (have I gone too far?)